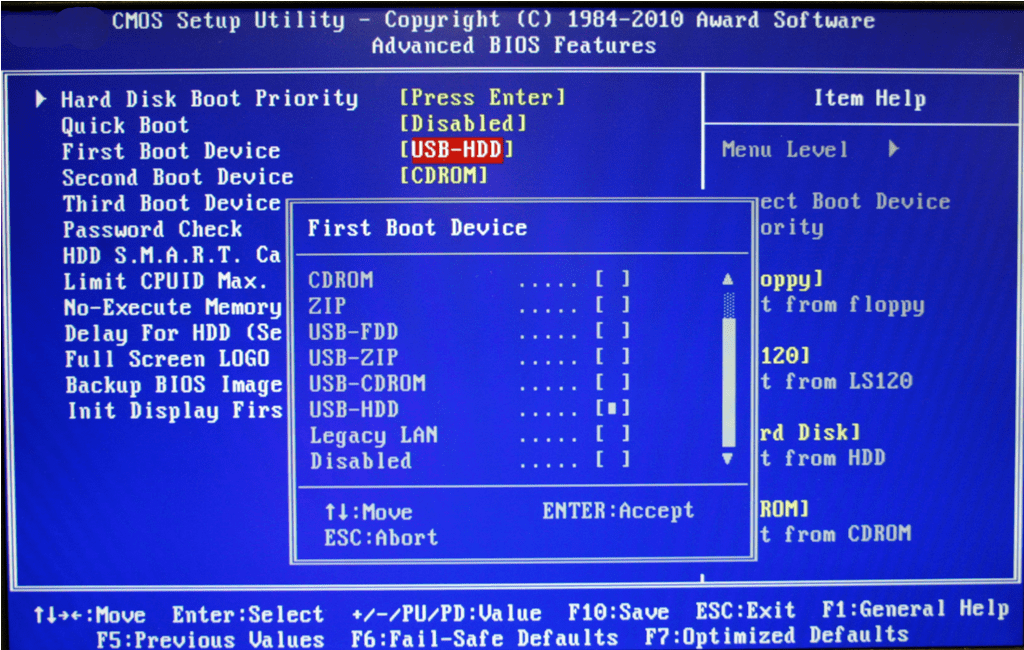

I have a problem with a CVM that won’t boot. This is on a semi-retired production cluster (not CE) that has no workloads running on it.

I found the console output in /tmp/.0 and I can see it trying to enable RAID devices, scan for a uuid marker and find 2 of them, then abort and unload the mpt3sas kernel module before trying again in 5 seconds. This repeats a few times before the hypervisor resets it and it starts booting again.

The most relevant sections of the log (copious kernel taint messages removed) are

    sd 2:0:3:0: Attached SCSI disk
    mdadm main: failed to get exclusive lock on mapfile
    mdadm: ignoring /dev/sdb3 as it reports /dev/sda3 as failed
    md/raid1:md127: active with 1 out of 2 mirrors
    md127: detected capacity change from 0 to 42915069952
    mdadm: /dev/md/phoenix:2 has been started with 1 drive (out of 2).
    md/raid1:md126: active with 2 out of 2 mirrors
    md126: detected capacity change from 0 to 10727981056
    mdadm: /dev/md/phoenix:1 has been started with 2 drives.
    mdadm: ignoring /dev/sdb1 as it reports /dev/sda1 as failed
    md/raid1:md125: active with 1 out of 2 mirrors
    md125: detected capacity change from 0 to 10727981056
    mdadm: /dev/md/phoenix:0 has been started with 1 drive (out of 2).
    mdadm: /dev/md/phoenix:2 exists - ignoring
    md/raid1:md124: active with 1 out of 2 mirrors
    md124: detected capacity change from 0 to 42915069952
    mdadm: /dev/md124 has been started with 1 drive (out of 2).
    mdadm: /dev/md/phoenix:0 exists - ignoring
    md/raid1:md123: active with 1 out of 2 mirrors
    md123: detected capacity change from 0 to 10727981056
    mdadm: /dev/md123 has been started with 1 drive (out of 2).
    svmboot: Checking /dev/md for /.nutanix_active_svm_partition
    svmboot: Checking /dev/md123 for /.nutanix_active_svm_partition
    EXT4-fs (md123): mounted filesystem with ordered data mode.
    svmboot: Appropriate boot partition with /.cvm_uuid at /dev/md123
    EXT4-fs (md125): mounted filesystem with ordered data mode.
    svmboot: Appropriate boot partition with /.cvm_uuid at /dev/md125
    svmboot: Checking /dev/nvme?*p?* for /.nutanix_active_svm_partition
    svmboot: error: too many partitions with valid cvm_uuid: /dev/md123 /dev/md125
    md123: detected capacity change from 10727981056 to 0
    md124: detected capacity change from 42915069952 to 0
    md125: detected capacity change from 10727981056 to 0
    md126: detected capacity change from 10727981056 to 0
    md127: detected capacity change from 42915069952 to 0
    modprobe: remove 'virtio_pci': No such file or directory
    mpt3sas version 14.101.00.00 unloading

As it occurs before the networking has started and gets reset by the hypervisor, I do not have any way of interacting with the VM.

After much mucking around, I was finally able to boot a System Rescue CD which had access to the RAID disks so I could fix it.

FYI - the hypervisor boots from the SATADOM but it does not have a device driver for the SAS HBA device so it cannot normally see the storage disks. The hypervisor boots the CVM which has a SAS device driver (mpt3sas), therefore all disk access is done through the CVM. The CVM boots off software RAID devices using the first 3 partitions of the SSDs. In my case, 2 of the software RAID devices had lost sync.

    ~]# lsblk
    cd/dvd ATEN Virtual CDROM YS0J
    loop0 7:0 0 632.2M 1 loop /run/archiso/sfs/airootfs
    md125 : active (auto-read-only) raid1 sdg2 sdb2
    md126 : active (auto-read-only) raid1 sdb3
    md127 : active (auto-read-only) raid1 sdb1

I could see the RAID devices probed as sdb and sdg, with partitions 1, 2, 3 configured but only partition 2 correctly in sync. The 4th partition is used for NFS in the CVM (ie.

Set the devices I needed to modify back to writable mode

    ~]# mdadm --readwrite
    ~]# mdadm --readwrite
    ~]# cat /proc/mdstat

Rejoin the devices back into the RAID1 mirror and let them resync

    ~]# mdadm /dev/md126 -a /dev/sdg3
    mdadm: re-added
    ~]# mdadm /dev/md127 -a /dev/sdg1

As an added check, run fsck on the volumes

    ~]# fsck /dev/md125
    /dev/md125 has gone 230 days without being checked, check forced.
    Pass 1: Checking inodes, blocks, and sizes
    Pass 5: Checking group summary information
    /dev/md127: clean, 66951/655360 files, 1866042/2619136 blocks

After rebooting back into the hypervisor, the CVM came up normally.
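The degraded mirrors show up in the console log as `active with 1 out of 2 mirrors` lines, so they can be picked out of a saved copy of the log mechanically. The following is only a rough sketch and was not part of the fix: the helper name `degraded_arrays` and the regular expression are hypothetical, written against the kernel messages quoted in this post, and other kernel versions may word these lines differently.

```python
import re

# Assumption: the kernel reports each RAID1 array in the form quoted in the
# log above, e.g. "md/raid1:md127: active with 1 out of 2 mirrors".
MIRROR_RE = re.compile(r"md/raid1:(md\d+): active with (\d+) out of (\d+) mirrors")


def degraded_arrays(log_text):
    """Return {array_name: (active, expected)} for arrays missing a mirror."""
    degraded = {}
    for name, active, expected in MIRROR_RE.findall(log_text):
        active, expected = int(active), int(expected)
        if active < expected:
            degraded[name] = (active, expected)
    return degraded


# A few lines taken from the console log in this post.
sample = """\
md/raid1:md127: active with 1 out of 2 mirrors
md/raid1:md126: active with 2 out of 2 mirrors
md/raid1:md125: active with 1 out of 2 mirrors
"""

if __name__ == "__main__":
    for name, (active, expected) in sorted(degraded_arrays(sample).items()):
        print(f"{name}: {active}/{expected} mirrors active")
```

Run against the full console output, a helper like this would list each array that started with a missing member, which is a quick way to see which partitions need to be re-added before trying the CVM again.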
